Cameras

Digital Camera Interfaces

This page is Section 10.1 of the Imaging Resource Guide.

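As imaging technology advances, camera types and interfaces keep evolving to meet the needs of a wide range of applications. In machine vision applications where inspection and analysis are critical, such as semiconductors, electronics, biotechnology, assembly, and manufacturing, using the camera system best suited to the task at hand is essential for achieving the best image quality. Understanding camera parameters such as the digital interface, power, and software is an excellent way to progress from imaging beginner to expert.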

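Digital cameras offer a variety of interfaces to choose from, depending on the application's requirements. Some formats, such as the USB standards, deliver both the video output and power over a single cable, which greatly simplifies setup. Other formats may require a separate power supply but offer other advantages, such as higher data transfer rates. Table 1 compares several digital camera interfaces.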

| Digital interface | USB 3.1 | GigE (PoE) | 5 GigE (PoE) | 10 GigE (PoE) | CoaXPress | Camera Link® |
|---|---|---|---|---|---|---|
| Data transfer rate | 5 Gb/s | 1000 Mb/s | 5 Gb/s | 10 Gb/s | ~12.5 Gb/s | ~6.8 Gb/s |
| Maximum cable length | 3 m (recommended) | 100 m | 100 m | 100 m | >100 m at 3.125 Gb/s | 10 m |
| Number of devices | ~127 | Unlimited | Unlimited | Unlimited | Unlimited | 1 |
| Connector | USB 3.1 Micro B / USB-C | RJ45 / Cat5e or 6 | RJ45 / Cat5e or 6 | Cat7 or fiber optic | RG59 / RG6 / RG11 | 26-pin |
| Capture board | Optional | Not required | Not required | Not required | Optional | Required |

Table 1: Comparison of common digital camera interfaces

USB (Universal Serial Bus)

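USB 3.1 Gen 1, formerly known as USB 3.0, is a popular interface thanks to its speed, convenience, and near-universal availability on computers. Because the bus bandwidth is fixed at 5 Gb/s and shared, the maximum speed that can actually be achieved depends on how many USB peripherals are connected. Under USB3 Vision, the camera control registers are based on the EMVA GenICam standard. The USB3 Vision standard does not itself provide backward compatibility with older USB computer ports, but some USB 3.1 Gen 1 cameras are backward compatible and can operate at the slower USB 2.0 rate (480 Mb/s). The USB 3.1 connector most commonly used in the machine vision camera industry is the USB 3.1 Micro B connector. USB-C (USB Type-C), a connector designed with the future in mind, is gradually entering the market. Its maximum speeds are 10 Gb/s for single-lane and 20 Gb/s for dual-lane operation. The connector is also compact and reversible. Cables and cameras that currently use USB-C are still limited to USB 3.1 Gen 1 data rates, and new connectors will be needed as the industry adopts USB 3.1 Gen 2 as an alternative, higher-speed interface.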
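Because every device on a USB 3.1 Gen 1 bus shares the same 5 Gb/s, a quick budget check is useful before hanging several cameras off one controller. The sketch below is a rough estimate under stated assumptions: the `usable_fraction` of 0.8 only removes the 8b/10b line-coding overhead (real protocol and host-controller overhead lower throughput further), and `CameraStream` and the camera settings are hypothetical illustrations, not part of any camera SDK.

```python
from dataclasses import dataclass

BUS_RATE_BPS = 5e9  # USB 3.1 Gen 1 signaling rate, shared by every device on the bus


@dataclass
class CameraStream:
    """Hypothetical camera acquisition settings used only for this estimate."""
    width: int           # pixels per line
    height: int          # lines per frame
    bits_per_pixel: int  # e.g. 8 for 8-bit monochrome
    fps: float           # frames per second

    def bits_per_second(self) -> float:
        return self.width * self.height * self.bits_per_pixel * self.fps


def bus_load(streams, usable_fraction=0.8):
    """Fraction of the usable bus bandwidth the streams together would need.

    usable_fraction is an assumption: 0.8 only removes 8b/10b line coding.
    A result above 1.0 means the cameras cannot all run at these settings.
    """
    demand_bps = sum(s.bits_per_second() for s in streams)
    return demand_bps / (BUS_RATE_BPS * usable_fraction)


# Two hypothetical 1920 x 1200, 8-bit cameras at 60 fps sharing one bus
cams = [CameraStream(1920, 1200, 8, 60), CameraStream(1920, 1200, 8, 60)]
print(f"bus load: {bus_load(cams):.0%}")
```

Treat a load that is close to 100% as a warning sign rather than a pass, since the real usable bandwidth is lower than this model assumes.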

GigE (Gigabit Ethernet)

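GigE is a high-speed camera interface based on the Gigabit Ethernet protocol, and it uses standard Cat 5e or Cat 6 cables. Standard Ethernet hardware such as switches, hubs, and repeaters can be used with multiple cameras, but unless the connection is peer-to-peer (the camera connected directly to the card), the overall bandwidth must be taken into account. In GigE Vision, the camera control registers comply with the EMVA GenICam standard. Link aggregation (LAG), available on some cameras, uses multiple Ethernet ports in parallel to increase the data transfer rate. Some cameras also support the Precision Time Protocol (PTP), which synchronizes the clocks of multiple cameras on the same network so that the timing relationship between their exposures is fixed. 5 GigE and 10 GigE are newer versions of the GigE interface, with data transfer rates of 5 Gb/s and 10 Gb/s, respectively.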
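PTP (IEEE 1588) estimates each camera's clock offset from a timestamped exchange of messages with the master clock. The sketch below shows the standard offset and mean path delay calculation for one exchange; it assumes the network path delay is the same in both directions, and the timestamps are made-up values. In practice the camera firmware and network hardware perform this exchange, so users do not normally compute it by hand.

```python
def ptp_offset_and_delay(t1, t2, t3, t4):
    """Return (offset, mean_path_delay) from one PTP exchange, in the timestamps' units.

    t1: master sends Sync           (master clock)
    t2: camera receives Sync        (camera clock)
    t3: camera sends Delay_Req      (camera clock)
    t4: master receives Delay_Req   (master clock)
    Assumes a symmetric path delay.
    """
    master_to_camera = t2 - t1  # = delay + offset
    camera_to_master = t4 - t3  # = delay - offset
    offset = (master_to_camera - camera_to_master) / 2
    delay = (master_to_camera + camera_to_master) / 2
    return offset, delay


# Hypothetical timestamps in ns: camera clock runs 500 ns ahead, true path delay 2000 ns
offset, delay = ptp_offset_and_delay(t1=0, t2=2500, t3=10000, t4=11500)
print(offset, delay)  # 500.0 2000.0
```

The camera then subtracts the estimated offset from its own clock, which is what keeps exposure timing aligned across cameras.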

CoaXPress

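CoaXPress is a plug-and-play, high-speed digital interface intended for high-resolution machine vision applications that require high frame rates. It uses coaxial cable and can be scaled across multiple cables; each cable supports up to 12.5 Gb/s and can deliver up to 13 W of power at a nominal 24 V. Rather than specifying a single maximum cable length, CoaXPress trades length against speed: the higher the bandwidth, the shorter the maximum cable length.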
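Since each coax cable carries up to 12.5 Gb/s and up to 13 W at a nominal 24 V, the number of cables a camera needs can be estimated by simple division. The helper below is a rough sketch that uses only the per-cable figures quoted above; it treats 12.5 Gb/s as fully usable (an optimistic assumption that ignores coding and protocol overhead), and the 30 Gb/s camera is hypothetical.

```python
import math

CXP_MAX_LINK_GBPS = 12.5   # maximum per-cable rate quoted above
CXP_POWER_PER_LINK_W = 13  # maximum power per cable quoted above
CXP_NOMINAL_V = 24         # nominal supply voltage quoted above


def cxp_links_needed(required_gbps: float) -> int:
    """Smallest number of cables whose combined raw rate covers required_gbps.

    Overhead is ignored, so treat the result as a lower bound.
    """
    return max(1, math.ceil(required_gbps / CXP_MAX_LINK_GBPS))


def cxp_power_budget(links: int):
    """Total power (W) across the cables and current per cable (A) at the nominal voltage."""
    return links * CXP_POWER_PER_LINK_W, CXP_POWER_PER_LINK_W / CXP_NOMINAL_V


links = cxp_links_needed(30)  # hypothetical camera needing 30 Gb/s
watts, amps_per_link = cxp_power_budget(links)
print(links, watts, round(amps_per_link, 2))  # 3 39 0.54
```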

Camera Link®

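Camera Link® is a high-speed serial interface standard developed specifically for machine vision applications. It requires a dedicated capture card, and the camera needs a separate power supply (except with PoCL). A single cable can carry 2.04 Gb/s of video data. Full-configuration data transfer requires special cables with LVDS signaling and independent, isochronous serial communication channels. The dual-output (full) configuration provides separate transmit and receive lines for camera parameters and unlocks a higher data rate (6.8 Gb/s), enabling extremely high-speed applications.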

Capture Boards

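Image processing is usually performed on a computer, which means that an analog camera, for example, needs a digital interface. A capture board brings the video output of an analog camera into the computer so that the user can run image analysis on that camera's images. Capture boards for analog signal inputs (NTSC, Y/C, PAL, CCIR) include an A/D converter that digitizes the signal for further image processing. Users can grab images and save the data for editing or printing. Capture boards ship with software for basic image acquisition, so data can be saved and images checked right away.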

A similar product is called a capture card. A capture card is needed when a digital camera interface uses a signal input port that the computer does not have; it brings the signal from that interface into the computer.

Laptops and Cameras

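Many digital camera interfaces can be connected to a laptop, but standard laptops should be avoided for high-performance, high-speed imaging applications. A laptop's data bus may not fully support the transfer rate, so the benefits of a high-performance camera and its software may not be fully realized. In particular, the Ethernet network interface cards built into most laptops perform far below the PCIe cards sold for desktop computers.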

Powering the Camera

This page is Section 10.2 of the Imaging Resource Guide.

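Many camera interfaces can power the camera remotely over the single signal cable (USB and PoE, for example). Power over Ethernet (PoE) is available on some GigE cameras, but not all. When it is not, power is typically supplied to the camera through a Hirose connector (which can also carry trigger inputs and I/O) or a standard AC/DC jack connector. Even when a camera can be powered from the card or port, connecting a separate power supply can be advantageous. The following are three ways to power a GigE camera: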

GPIO (General-Purpose Input/Output) Power Cable Connection

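Connect the camera to the computer with a GigE cable. Then plug the GPIO power cable (typically one with a Hirose connector) into an outlet and connect it to the camera's power port. Depending on the camera, a GPIO cable with a different number of pins, such as 6-pin or 12-pin, is required. For cameras that do not support PoE, the power cable is the only way to supply power to the camera.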

PoE Injector

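A PoE injector can supply power to the camera over the GigE cable. This matters when space constraints, such as on a factory floor or outdoors, prevent the camera from using a dedicated power supply. In this case, the injector is inserted somewhere along the standard cable run between the camera and the computer. Note, however, that not all GigE cameras support PoE. Connect a power cable to the PoE injector, and connect its IN port to the computer with a GigE cable. Then connect the injector's OUT port to the camera with a second GigE cable.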

PoE Network Interface Card (PoE NIC)

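A PoE NIC lets the camera connect to a secure fiber network while powering the camera from the terminal through the computer interface. A PoE NIC also reduces the number of outlets and cables required. Insert the PoE card into an open slot on the computer's motherboard and connect it to the internal power connector. Then connect one of the card's PoE ports to the camera with a GigE cable.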

Camera Software

This page is Section 10.3 of the Imaging Resource Guide.

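In general, there are two options for imaging software: a camera-specific software development kit (SDK) or third-party software. An SDK includes an application programming interface (API) with code libraries for users to build their own programs, along with simple image-viewing and acquisition programs that require no coding, and it lets users configure basic camera functions. With third-party software, compliance with camera standards (GenICam, DCAM, USB3 Vision, GigE Vision) is essential for ensuring functionality. Third-party packages such as NI LabVIEW™, MATLAB®, and OpenCV can often run multiple cameras and support several different interfaces, but ensuring that everything works is ultimately up to the user.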

Sensors

This page is Section 10.4 of the Imaging Resource Guide.

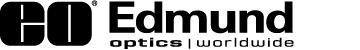

Sensor Size

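The size of the camera sensor's active area is important in determining the system's field of view (FOV) and primary magnification (PMAG). For a given imaging lens magnification, a larger sensor yields a larger FOV. Figure 1 and Table 2 show sensor sizes for standard area scan cameras on the market. The "inch" or "type" designations used for sensor sizes date back to the vidicon vacuum tubes originally used as image pickup devices for television broadcasting, and they do not match the actual dimensions of today's sensors. Nevertheless, many of these sensor sizes still keep a 4:3 (horizontal:vertical) aspect ratio.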
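The relationship above can be written as PMAG = sensor size / FOV, so for a fixed PMAG the FOV grows in proportion to the sensor. The sketch below applies it to each sensor axis. The sensor dimensions are typical nominal values assumed for illustration (for example 6.4 mm × 4.8 mm for the ½-inch format) and should be checked against the actual camera datasheet.

```python
# Nominal active-area dimensions (width, height) in mm for a few common formats.
# These are typical values assumed for illustration; confirm against the sensor datasheet.
SENSOR_FORMATS_MM = {
    '1/3"': (4.8, 3.6),
    '1/2"': (6.4, 4.8),
    '2/3"': (8.8, 6.6),
    '1"': (12.8, 9.6),
}


def field_of_view(sensor_mm, pmag):
    """FOV (mm) along each sensor axis, from PMAG = sensor size / FOV."""
    return tuple(s / pmag for s in sensor_mm)


for name, dims in SENSOR_FORMATS_MM.items():
    w, h = field_of_view(dims, pmag=0.5)
    print(f"{name:>4} sensor at 0.5x -> FOV {w:.1f} x {h:.1f} mm")
```

Note that every entry keeps the 4:3 aspect ratio mentioned above, so the FOV does too.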

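One problem that occasionally arises in imaging applications is whether the lens can cover the sensor. If the sensor is larger than the lens was designed for, vignetting (a reduction in the amount of light passing through the edges of the lens) makes the image progressively darker toward its periphery and degrades image quality. In some cases, a phenomenon called tunneling occurs and the edges of the image turn completely black. This vignetting problem does not occur when the sensor is smaller than the lens design.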

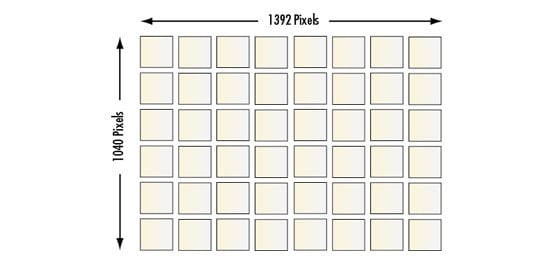

CCD vs. CMOS

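CCD (charge-coupled device) and CMOS (complementary metal-oxide semiconductor) sensor technologies differ in how they convert light into an electrical signal. In a CCD sensor, the signal charge produced by photoelectric conversion in each pixel is transferred to a downstream output amplifier and converted to an analog voltage there, for all pixels at one amplifier. In a CMOS sensor, by contrast, each pixel converts its own signal charge into an analog voltage. Because every pixel has its own amplifier stage, performance variations between those amplifiers tend to show up as nonuniformity across the sensor (also called fixed-pattern noise).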
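The toy model below illustrates why a per-pixel amplifier leads to fixed-pattern nonuniformity while a single shared amplifier does not. It is a deliberately simplified sketch: the 2% gain spread and signal level are hypothetical, and shot noise, read noise, and on-chip correction are all omitted so that only amplifier-to-amplifier variation remains.

```python
import random
import statistics


def uniform_light_row(n_pixels, signal, gain_spread, per_pixel_amp, seed=0):
    """Toy model: output of one row of pixels under perfectly uniform illumination.

    per_pixel_amp=False: CCD-like readout; every pixel's charge passes through
    one shared output amplifier (gain fixed at 1.0 here), so all outputs match.
    per_pixel_amp=True: CMOS-like readout; each pixel has its own amplifier
    whose gain varies with relative standard deviation gain_spread.
    """
    if not per_pixel_amp:
        return [signal * 1.0] * n_pixels
    rng = random.Random(seed)
    return [signal * rng.gauss(1.0, gain_spread) for _ in range(n_pixels)]


ccd_like = uniform_light_row(1000, 1000.0, 0.02, per_pixel_amp=False)
cmos_like = uniform_light_row(1000, 1000.0, 0.02, per_pixel_amp=True)
print("CCD-like pixel-to-pixel spread: ", statistics.pstdev(ccd_like))
print("CMOS-like pixel-to-pixel spread:", statistics.pstdev(cmos_like))
```

Because the per-pixel gains are fixed properties of the hardware, the resulting pattern repeats from frame to frame, which is why it is called fixed-pattern noise.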

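Advances in CMOS sensor technology over the past several years have greatly reduced nonuniformity in low-light conditions, and in many applications high-end CMOS sensors now perform on par with comparable CCDs. CMOS sensors also consume less power than CCDs, which makes them practical for size-constrained applications. However, low-end CMOS sensors with pixel sizes of roughly 3 µm or less still fall short of CCDs in image quality. Table 3 summarizes the performance differences between the two sensor types.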

Figure 1: Sensor sizes of standard camera sensors

| Pixel size [µm] | 9.9 | 7.4 | 5.86 | 5.5 | 4.54 | 3.69 | 3.45 | 2.2 | 1.67 |
|---|---|---|---|---|---|---|---|---|---|
| Resolution [lp/mm] | 50.5 | 67.6 | 85.3 | 90.9 | 110.1 | 135.5 | 144.9 | 227.3 | 299.4 |
| Typical ½-inch sensor [MP] | 0.31 | 0.56 | 0.89 | 1.02 | 1.49 | 2.26 | 2.58 | 6.35 | 11.02 |

Table 2: Camera resolution by pixel size
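The values in Table 2 follow from two simple relations, assuming one line pair spans two pixels (the Nyquist limit) and a ½-inch sensor with a nominal active area of 6.4 mm × 4.8 mm: resolution [lp/mm] = 1000 / (2 × pixel size [µm]), and megapixels = (6.4 mm / pixel size) × (4.8 mm / pixel size). The sketch below recomputes the table; the 6.4 × 4.8 mm area is an assumption that happens to reproduce the listed values, not a figure stated in the table.

```python
HALF_INCH_SENSOR_MM = (6.4, 4.8)  # assumed nominal 1/2" active area (width, height)


def limiting_resolution_lp_per_mm(pixel_um: float) -> float:
    """Nyquist-limited resolution: one line pair needs two pixels."""
    return 1000.0 / (2.0 * pixel_um)


def megapixels(pixel_um: float, sensor_mm=HALF_INCH_SENSOR_MM) -> float:
    """Approximate pixel count (in MP) that fits on the sensor's active area."""
    width_px = sensor_mm[0] * 1000.0 / pixel_um
    height_px = sensor_mm[1] * 1000.0 / pixel_um
    return width_px * height_px / 1e6


for p in [9.9, 7.4, 5.86, 5.5, 4.54, 3.69, 3.45, 2.2, 1.67]:
    print(f"{p:5.2f} um  {limiting_resolution_lp_per_mm(p):6.1f} lp/mm  {megapixels(p):6.2f} MP")
```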

| | CCD | CMOS | | CCD | CMOS |
|---|---|---|---|---|---|
| Pixel signal | Charge | Voltage | Uniformity | High | Medium |
| Chip signal | Analog output | Digital output | Resolution | Low to high | Low to high |
| Fill factor | High | Medium | Speed | Medium to high | High |
| Sensitivity | Medium | Medium to high | Power consumption | Medium to high | Low |
| Noise level | Low | Low to high | Complexity | Low | Medium |
| Dynamic range | High | Medium to high | Cost | Medium | Low |

Table 3: CCD vs. CMOS sensors

Spectral Properties

This page is Section 10.5 of the Imaging Resource Guide.

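Whether it is beneficial for the camera to reproduce color depends on the application's requirements. Table 4 compares the characteristics of monochrome cameras, single-chip color cameras, and 3-chip color cameras. See the following sections for more detailed explanations.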

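| Monochrome | Color (single-chip) | Color (3-chip) |
|---|---|---|
| Outputs grayscale images | Typically uses a Bayer-type RGB mosaic filter | A prism splits incoming light into the three primary colors (R, G, B) |
| Resolution typically 10% or more higher than a single-chip color camera | Lower resolution (more pixels are needed to detect color) | Expensive (three sensors, one each for R, G, and B) |
| Higher S/N and higher contrast | | Higher color-image resolution than a comparable single-chip camera |
| Lower minimum illumination and higher sensitivity | | Lower sensitivity in low light |
| | | More restrictions on usable camera lenses |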

Table 4: Comparison of monochrome, single-chip color, and 3-chip color cameras

Monochrome Cameras

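CCD and CMOS image sensors built on silicon typically have a usable wavelength range of about 400 to 1000 nm, although their sensitivity extends over roughly 350 to 1050 nm. Figure 2 shows the spectral response curve of a typical monochrome sensor. Some high-quality color and monochrome cameras are fitted with an infrared cut filter (IR cut filter) for imaging in the visible spectrum.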

Figure 2: Spectral response of a typical monochrome CCD camera

Color Cameras

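Solid-state image sensors, which operate by the photoelectric effect, cannot distinguish color unless some additional means is provided. Color CCD cameras come in two types: single-chip and 3-chip. The single-chip color CCD camera is the most commonly used color camera and offers a low-cost solution. A mosaic filter (such as a Bayer filter) is arranged so that each pixel on the sensor is sensitive only to a specific band of wavelengths. After the pixels capture light, a demosaicing ("de-Bayer") algorithm interpolates true color information from the RGB signals to form the color image in software. Because of the mosaic filter pattern, more pixels are needed to form the color image, so a single-chip color camera has lower resolution than a monochrome camera with the same number of pixels. The 3-chip color CCD (3CCD) camera is designed to solve this resolution problem: a prism placed in front of the sensors directs the R, G, and B components onto separate sensor chips. 3CCD cameras typically deliver very high resolution and more accurate color reproduction, but their drawbacks are lower sensitivity and higher cost.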
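Real cameras and SDKs use more elaborate interpolation, but the simplest possible demosaic, a 2×2 "superpixel" method, makes the resolution cost easy to see: each RGGB block of four sensor pixels becomes one RGB pixel. This is a sketch for illustration only, and it assumes an RGGB pattern starting at the top-left pixel; the actual Bayer layout varies between sensors.

```python
def superpixel_demosaic(raw):
    """Convert an RGGB Bayer mosaic (list of rows) into an RGB image at half resolution.

    Assumes the pattern
        R G
        G B
    repeats from the top-left corner; an odd trailing row or column is dropped.
    """
    rows = len(raw) // 2 * 2
    cols = len(raw[0]) // 2 * 2
    rgb = []
    for y in range(0, rows, 2):
        out_row = []
        for x in range(0, cols, 2):
            r = raw[y][x]
            g = (raw[y][x + 1] + raw[y + 1][x]) / 2  # average the two green samples
            b = raw[y + 1][x + 1]
            out_row.append((r, g, b))
        rgb.append(out_row)
    return rgb


# A 4x4 mosaic of a uniform scene with R=200, G=120, B=40
raw = [
    [200, 120, 200, 120],
    [120, 40, 120, 40],
    [200, 120, 200, 120],
    [120, 40, 120, 40],
]
image = superpixel_demosaic(raw)
print(len(image), len(image[0]), image[0][0])  # 2 2 (200, 120.0, 40)
```

Sixteen sensor pixels yield only four color pixels here; interpolating demosaic algorithms recover more apparent detail, but the underlying trade-off described above remains.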

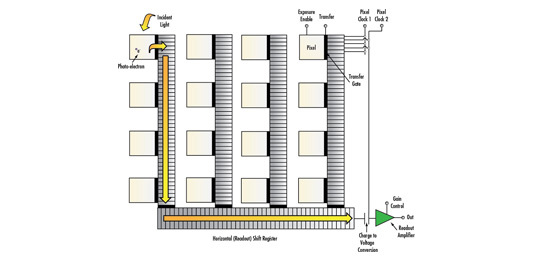

Frame Rate and Shutter Speed

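Frame rate is the number of full frames captured per second. In high-speed applications, objects move quickly through the vision system's FOV, so choosing a camera with a higher frame rate helps capture more images of the object. Shutter speed is inversely related to the sensor's exposure time: a faster shutter speed means a shorter exposure. Exposure time controls how much incident light the sensor collects. Blooming (caused by overexposure) can be controlled by reducing the illumination or by raising the shutter speed (shortening the exposure time).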
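A rough way to size the frame rate is to ask how many frames capture the object while it crosses the FOV. The sketch below assumes a constant object speed along one FOV axis; the 100 mm FOV and 500 mm/s speed are hypothetical numbers.

```python
def frames_while_in_view(fov_mm: float, speed_mm_s: float, fps: float) -> float:
    """Number of frames captured while a point on the object crosses the FOV."""
    time_in_view_s = fov_mm / speed_mm_s
    return time_in_view_s * fps


def min_fps_for_frames(fov_mm: float, speed_mm_s: float, frames_needed: int) -> float:
    """Frame rate needed for the object to appear in at least frames_needed frames."""
    return frames_needed * speed_mm_s / fov_mm


# Hypothetical: 100 mm FOV, parts moving at 500 mm/s
print(frames_while_in_view(100, 500, fps=30))          # about 6 frames
print(min_fps_for_frames(100, 500, frames_needed=10))  # 50.0 fps
```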

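A system's maximum frame rate depends on the sensor readout speed, the interface's data transfer rate, and the number of pixels (the amount of data per frame). A camera can run at a higher frame rate if the sensor is binned to lower the resolution or if an area of interest (AOI) is set. In digital cameras, the exposure time can be set anywhere from sub-microsecond to several minutes. For long exposures, CCD cameras, which have lower dark current and noise than CMOS cameras, are generally the more practical choice.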
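The interface part of that limit can be estimated by dividing the usable link rate by the number of bits in one frame; binning and AOI raise the ceiling because they shrink the frame. The sketch below ignores sensor readout limits and protocol overhead, both of which cap real cameras lower, and the 2448 × 2048 sensor on a 1 Gb/s link is a hypothetical example.

```python
def interface_limited_fps(width, height, bits_per_pixel, link_bps,
                          binning=1, aoi=None, usable_fraction=1.0):
    """Upper bound on frame rate imposed by the interface alone.

    binning: n for n x n binning (divides both axes by n).
    aoi: optional (aoi_width, aoi_height) read out instead of the full frame.
    usable_fraction: share of link_bps available for pixel data (an assumption).
    """
    if aoi is not None:
        width, height = aoi
    bits_per_frame = (width // binning) * (height // binning) * bits_per_pixel
    return link_bps * usable_fraction / bits_per_frame


full = interface_limited_fps(2448, 2048, 8, link_bps=1e9)
binned = interface_limited_fps(2448, 2048, 8, link_bps=1e9, binning=2)
roi = interface_limited_fps(2448, 2048, 8, link_bps=1e9, aoi=(1024, 512))
print(f"{full:.1f} fps full, {binned:.1f} fps with 2x2 binning, {roi:.1f} fps with AOI")
```

With 2×2 binning the frame holds a quarter of the data, so the interface ceiling rises fourfold.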

Electronic Shutters: Global vs. Rolling

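A global shutter, analogous to a mechanical shutter, exposes and samples all pixels simultaneously and then reads them out sequentially; exposure starts and ends at exactly the same time for every pixel. With a rolling shutter, by contrast, exposure, sampling, and readout all happen sequentially, so the timing differs slightly from one line of the image to the next. As a result, a moving object imaged with a rolling shutter appears distorted. This effect can be minimized by triggering a strobe light during the interval when all lines of the image are exposing, and it is not a problem when imaging slowly moving objects. Implementing a global shutter in a CMOS sensor makes the sensor architecture more complex than a standard rolling shutter design, so not all CMOS sensors offer a global shutter. Figure 3 compares images taken with a global shutter and a rolling shutter.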
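The distortion follows from simple kinematics: if each row starts exposing one line time after the previous row, an object moving sideways has moved further by the time later rows are captured. The sketch below is a simplified model that ignores exposure duration and readout details; the row count, line time, and object speed are hypothetical.

```python
def row_capture_times(n_rows, line_time_s, global_shutter):
    """Start-of-exposure time of each row relative to the first row."""
    if global_shutter:
        return [0.0] * n_rows
    return [r * line_time_s for r in range(n_rows)]


def horizontal_shift_px(n_rows, line_time_s, speed_px_s, global_shutter):
    """Apparent horizontal shift (pixels) of a vertical edge moving sideways, per row."""
    times = row_capture_times(n_rows, line_time_s, global_shutter)
    return [t * speed_px_s for t in times]


# Hypothetical: 1000 rows, 10 us line time, edge moving at 20,000 px/s
rolling = horizontal_shift_px(1000, 10e-6, 20_000, global_shutter=False)
global_ = horizontal_shift_px(1000, 10e-6, 20_000, global_shutter=True)
print(f"rolling: last row shifted {rolling[-1]:.1f} px; global: {global_[-1]:.1f} px")
```

In this model a vertical edge becomes a slanted line about 200 pixels wide under the rolling shutter, while the global shutter keeps it vertical.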

Figure 3: Images of a moving printed circuit board (A), captured with a global shutter (B) and with a rolling shutter (C)

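In contrast to global and rolling shutters, asynchronous shuttering refers to triggered exposure of the pixels. The camera is always ready to acquire an image, but the pixels are not exposed until an external trigger signal is received. This differs from the usual constant frame rate, which can be thought of as an internal trigger for the shutter.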

For the basics of digital camera and sensor settings such as gain, gamma, and area of interest (AOI), see Imaging Electronics 101: Basics of Digital Camera Settings for Improved Imaging Results.

Area Scan vs. Line Scan Cameras

This page is Section 10.6 of the Imaging Resource Guide.

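System integrators must choose between an area scan camera and a line scan camera according to the application's requirements. In an area scan camera, the imaging lens forms an image of the object on the sensor array, and the information captured by every pixel is reconstructed into a single frame (see Figure 4a). This works well when the object is not moving quickly and is not extremely large. A line scan camera, by contrast, has its pixels arranged in a single line; as the object moves past the camera, software reconstructs the image from the successively captured lines (see Figure 4b).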

Figure 4: Illustration of area scan (left) and line scan (right)

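While the highest-resolution area scan sensors have around 4,000 pixels horizontally, line scan sensors with 16,000 or more pixels are not uncommon. However, a line scan camera requires the object to move precisely relative to the camera to build a good-quality image, which makes system integration more difficult. Table 5 lists the characteristics of area scan and line scan cameras.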
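One way to see why the motion must be precise: for square pixels in the reconstructed image, the object should advance by exactly one object-space pixel per line, so line rate = object speed / (FOV width / number of pixels). The sketch below computes that rate and stacks successive line readouts into a 2D image. The 8000-pixel sensor, 400 mm FOV, and 500 mm/s speed are hypothetical, and `read_line` is a hypothetical stand-in for a real camera's acquisition call.

```python
from typing import Callable, List


def required_line_rate_hz(fov_width_mm: float, n_pixels: int, speed_mm_s: float) -> float:
    """Line rate that makes the along-motion pixel pitch equal the across-motion pitch."""
    pixel_size_mm = fov_width_mm / n_pixels  # object-space size of one pixel
    return speed_mm_s / pixel_size_mm


def build_image(read_line: Callable[[int], List[int]], n_lines: int) -> List[List[int]]:
    """Stack successive line readouts (one per trigger) into a 2D image."""
    return [read_line(i) for i in range(n_lines)]


# Hypothetical: 8000-pixel line sensor imaging a 400 mm wide web moving at 500 mm/s
print(required_line_rate_hz(400, 8000, 500))  # about 10000 lines/s (10 kHz)

# Fake line source for demonstration: each "line" is an 8-pixel ramp
img = build_image(lambda i: [i * 10 + x for x in range(8)], n_lines=3)
print(len(img), len(img[0]))  # 3 8
```

If the object speed drifts while the line rate stays fixed, the image is stretched or compressed along the direction of motion, which is why line scan systems are often triggered from an encoder on the conveyor.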

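| Area scan | Line scan |
|---|---|
| Typically a 4 (H):3 (V) aspect ratio | Sensor is a linear array (not an area array) |
| Suited to imaging fast-moving objects at up to several hundred fps | Ideal for imaging fast-moving objects (line rates up to 100 kHz) |
| Ideal for stationary or slow-moving objects | Builds the image one line at a time |
| Suitable for a wide range of applications | For moving objects passing under the sensor |
| Easy system setup | Ideal for capturing wide objects |
| Lower cost than line scan | Alignment and timing between system components require extra effort |
| | Complex system integration (but simple illumination) |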

Table 5: Comparison of digital camera formats: area scan and line scan

Camera Mounts (Lens Mounts)

| C-mount | CS-mount | TFL-mount | F-mount | Other common camera mounts |
|---|---|---|---|---|
| Threaded mount | Threaded mount | Threaded mount | Nikon bayonet mount | M12 × 0.5 (S-mount) |
| 1-32UNF (M25.4 × 0.79) | 1-32UNF (M25.4 × 0.79) | M35 × 0.5 | Used on cameras with large-format sensors | M42 × 1.0 |
| Flange distance 17.526 mm (in air) | Flange distance 12.5 mm (in air) | Flange distance 17.526 mm (in air) | Flange distance 46.5 mm (in air) | M72 × 1.0 |
| Most common mount on industrial cameras | C-mount lenses can be used with a CS-mount adapter (#03-618) | Ideal for sensor formats from 4/3-type to APS-C | Ideal for mid-size line scan and 35 mm format sensors | |
| Some short focal length lenses cannot be used | Common on short focal length and varifocal lenses | | | |
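The flange distances in the table explain the adapter note: a spacer can only lengthen the lens-to-sensor distance, so a lens designed for a longer flange distance can be adapted to a camera with a shorter one, but not the reverse. The sketch below is a simplified geometric check using the table's C and CS values (which share the 1-32UNF thread); it ignores mechanical interference from rear lens elements and any mount pairs with different threads or bayonets.

```python
# Flange distances (mm) from the table above; C and CS share the 1-32UNF thread.
FLANGE_MM = {"C": 17.526, "CS": 12.5}


def spacer_needed_mm(lens_mount: str, camera_mount: str):
    """Spacer thickness needed to use lens_mount on camera_mount, or None if impossible.

    A negative gap would require moving the lens closer than the camera's flange
    allows, which no spacer can do.
    """
    gap = FLANGE_MM[lens_mount] - FLANGE_MM[camera_mount]
    if gap < 0:
        return None
    return gap


print(spacer_needed_mm("C", "CS"))  # about 5 mm: a C-mount lens on a CS-mount camera needs a spacer
print(spacer_needed_mm("CS", "C"))  # None: a CS-mount lens cannot reach its design focus on a C-mount camera
```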


Copyright 2023, Edmund Optics Japan Co., Ltd.

[Tokyo Office] Pacific Square Sengoku 4F, 2-29-24 Honkomagome, Bunkyo-ku, Tokyo 113-0021, Japan

[Akita Factory] 3 Dannoue, Iwasaki, Yuzawa-shi, Akita 012-0801, Japan

The FUTURE Depends On Optics®